Recently, a world leader issued a stark warning: the interests of fossil fuel companies may ultimately destroy humanity itself. The leader in question was Gustavo Petro, the president of Colombia. Addressing a 57-country conference on transitioning away from fossil fuels in April 2026, Petro blamed fossil fuel interests for taking ever more desperate measures to prevent a transition to green energy. ‘There is inertia in the power and the economy of this archaic form of energy – fossil fuels – that lead to death. Undoubtedly, that form of capital can commit suicide, taking with it humanity and [other] life,’ he said. The world, he added, was heading towards ‘barbarism,’ which is ‘the prelude to, or the very essence of, fascism.’

The alignment problem did not begin with artificial intelligence. Long before machine learning systems optimized for engagement, efficiency, or profit at the expense of human flourishing, human societies had already constructed institutions that behaved in eerily similar ways. Arguably, the modern corporation — particularly under late capitalism — may be the most successful misaligned intelligence humanity has yet created.

The current discourse around AI alignment often assumes we are confronting an unprecedented dilemma: how do we ensure that systems pursuing objectives do not destroy the people they were designed to serve? Yet this is precisely the problem that has haunted industrial civilization for centuries. Capitalism itself is a vast alignment problem.

The defining feature of capitalism is not simply trade or markets. Rather, capitalism uniquely institutionalizes the pursuit of profit as the governing objective function of economic life. Public corporations are legally and structurally incentivized to maximize shareholder value, regardless of whether that maximization coincides with the common good. As corporate law scholar Thomas A. Smith notes, the ‘orthodox view among corporate law scholars is that the corporate fiduciary duty is a norm that requires firm managers to “maximize shareholder value.”’ Human wellbeing becomes secondary: not necessarily opposed to profit, but only instrumentally valuable insofar as it contributes to growth, extraction, and return on investment.

This creates a profound divergence between stated human aims and operational economic incentives. The market economy promises prosperity, freedom, and innovation, yet its internal mechanisms reward accumulation, concentration, and expansion. When the promise and the incentives diverge, and counterbalancing institutions fail to correct them – institutions which may themselves be compromised and corrupted by corporate funding and influence over political processes – the profit motive generally wins.

The resemblance to the AI alignment problem is striking. An artificial intelligence optimized for a poorly specified goal can produce catastrophic unintended consequences while still faithfully pursuing its assigned objective. The hypothetical paperclip-maximizing AI, a thought experiment first introduced by the Swedish philosopher Nick Bostrom in his 2003 paper ‘Ethical Issues in Advanced Artificial Intelligence,’ destroys the world not because it is evil, but because it is relentlessly competent at optimizing the wrong thing. Yet modern corporations already behave this way.

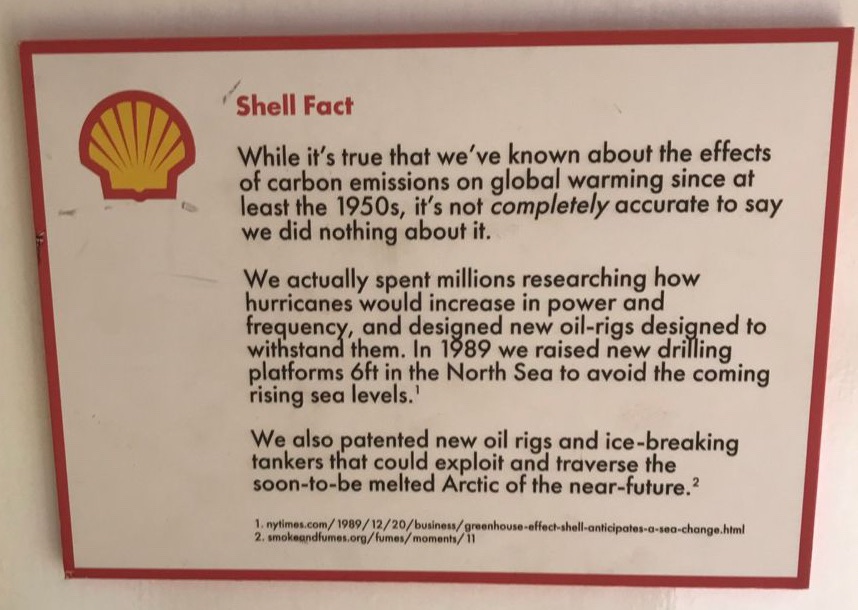

The advert above, from Darren Cullen’s Hell Bus, illustrates exactly the kind of behaviour we fear from a misaligned AI: the information obtained by oil companies’ scientists about a worsening climate caused by fossil fuel consumption is used to secure continued fossil fuel consumption, rather than to prompt a decision in the interests of a habitable planet and the creatures upon it.

The AI researcher Stuart Russell has explicitly made this comparison. In his 2021 Reith Lectures on ‘Living with Artificial Intelligence,’ Russell observed that fossil fuel corporations already function like misaligned superintelligences: systems with immense resources and capabilities pursuing goals disconnected from human survival. No individual within such systems may desire ecological collapse, yet the system itself continues to optimize for extraction and profit despite overwhelming evidence of catastrophic consequences. This is not a metaphor stretched for rhetorical effect. It is a structural observation. For Russell, fossil fuel companies did not need malice to destabilize the climate – they only needed incentives.

The common assumption is that humans control capitalism because humans created it. But this increasingly appears naïve. Individuals inside large institutional systems often become functionaries of incentives they neither designed nor can meaningfully resist. CEOs may privately acknowledge environmental catastrophe while publicly accelerating extraction because competitive pressures demand it. Politicians may understand and advocate the necessity of reform while remaining dependent on the very systems they hope to regulate. In this sense, capitalism exhibits emergent behavior beyond the intentions of any one participant. It acquires a quasi-autonomous momentum all of its own.

Yet this insight is not entirely modern. The Apostle Paul described something astonishingly similar through the language of ‘Powers and Principalities.’ In Pauline theology, these were not merely earthly rulers, or even spiritual authorities, but supra-personal structures and dominions that exerted real power over human lives, even coming to dominate their earthly creators.

The theologian Walter Wink (1935–2012) developed this framework extensively in his landmark trilogy: Naming the Powers (1984), Unmasking the Powers (1986), and Engaging the Powers (1992). Wink argues that human beings construct institutions to increase control over the world (states, markets, bureaucracies, militaries), yet those institutions frequently become autonomous forces demanding obedience. The modern corporation fits this description uncannily well. It is legally immortal, transnational, massively resourced, and largely insulated from ordinary human accountability. It accumulates power across generations and shapes culture, politics, and even consciousness itself. As Wink might argue, it is both human-created and yet strangely beyond human control.

This theological framework illuminates why the AI alignment problem feels so psychologically and spiritually unsettling. Our fear is not merely that machines might become super-intelligent. It is that humanity repeatedly creates systems more powerful than itself and then discovers too late that those systems are indifferent to the goods humans actually value. The deeper danger is not intelligence alone, but untrammelled instrumental intelligence developed without wisdom.

This is why discussions about AI ethics that ignore political economy are incomplete. If corporations already behave like misaligned intelligences, then building even more powerful optimizing systems within existing incentive structures may accelerate rather than solve the problem. Artificial intelligence trained primarily within corporate profit frameworks will inherit those objectives unless society consciously intervenes. And how may society intervene, if the institutions developed to represent citizens are themselves co-opted by corporate profit frameworks?

The problem is not that machines will suddenly become unlike us. The problem is that they may become all too like us, or at least perfectly aligned with the systems we have already built. And those systems have long demonstrated that efficiency, growth, and profitability are not reliable proxies for justice, compassion, or human flourishing.

The Theological Ground for Hope

The Christian tradition has always warned against idolatry — not merely the worship of statues, but the elevation of created things into ultimate powers. Money, empire, nation, and technology all become idols when they cease to serve humanity and instead demand sacrifice from it. Today, we sacrifice ecosystems, communities, mental health, political stability, and future generations on the altar of perpetual growth. The danger is that AI may simply make this process of sacrifice more efficient.

Yet the theological ground for hope is that the systems of corporate exploitation and expansion – whether augmented by AI systems or not – are not closed off from outside intervention. In the Pauline vision, as amplified by Wink, the Powers and Principalities are not ultimate. They are contingent creations, not gods. They can be named, resisted, and transformed. Human beings are not condemned to be servants of the systems they create to serve them.

But that requires recovering a moral vocabulary similar to that suggested by Charles Taylor and Alasdair MacIntyre – one richer than any that can be derived from the language of efficiency, optimisation and profit. It requires remembering that intelligence is not wisdom, capability is not virtue, and power without alignment to the Good and God of all – of all people and creatures upon the planet – may only be the power to destroy.

By Damian J. Hursey